PyTorch + ONNX Runtime = Production

Deploy anywhere

Run PyTorch models on cloud, desktop, mobile, IoT, and even in the browser

Boost performance

Accelerate PyTorch models to improve user experience and reduce costs

Improve time to market

Used by Microsoft and many others for their production PyTorch workloads

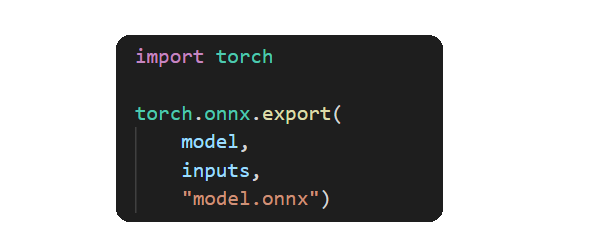

Native support in PyTorch

PyTorch includes support for ONNX through the torch.onnx APIs to simplify exporting your PyTorch model to the portable ONNX format. The ONNX Runtime team maintains these exporter APIs to ensure a high level of compatibility with PyTorch models.
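As a rough illustration of the export step described above, the sketch below wraps `torch.onnx.export` in a small helper. The helper names (`batch_dynamic_axes`, `export_to_onnx`), the tensor names `"input"` and `"output"`, and the choice to make axis 0 a variable-size batch axis are assumptions made for this example, not part of any official recipe. Exporter behavior and optional dependencies can vary between PyTorch versions.

```python
from typing import Dict, Sequence


def batch_dynamic_axes(tensor_names: Sequence[str]) -> Dict[str, Dict[int, str]]:
    """Mark axis 0 of every named tensor as a variable-size batch axis."""
    return {name: {0: "batch"} for name in tensor_names}


def export_to_onnx(model, example_input, path: str) -> None:
    """Export a torch.nn.Module to an ONNX file (requires the torch package)."""
    # Imported lazily so the pure-Python helper above works without PyTorch.
    import torch

    # Inference mode: disables dropout and freezes batch-norm statistics.
    model.eval()
    torch.onnx.export(
        model,
        (example_input,),          # example inputs used to capture the model graph
        path,                      # destination .onnx file
        input_names=["input"],     # hypothetical names chosen for this sketch
        output_names=["output"],
        dynamic_axes=batch_dynamic_axes(["input", "output"]),
    )
```

With PyTorch installed, a call such as `export_to_onnx(torch.nn.Linear(4, 2), torch.randn(1, 4), "linear.onnx")` should write a portable model file that no longer depends on the Python class that defined it.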

Python not required

Training PyTorch models requires Python, but that dependency can be a significant obstacle when deploying to many production environments, especially Android and iOS mobile devices. ONNX Runtime is designed for production and provides APIs in C/C++, C#, Java, and Objective-C, creating a bridge from your PyTorch training environment to a successful production deployment.

Lower latency, higher throughput

Better performance can improve your user experience and lower your operating costs. A wide range of models, from computer vision (ResNet, MobileNet, Inception, YOLO, super resolution, etc.) to speech and NLP (BERT, RoBERTa, GPT-2, T5, etc.), can benefit from ONNX Runtime's optimized performance. The ONNX Runtime team regularly benchmarks and optimizes popular models. ONNX Runtime also integrates with leading hardware accelerator libraries such as TensorRT and OpenVINO, so you can get the best performance on the hardware available while using the same APIs across all your target platforms.
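The idea of one API across different accelerators can be sketched in Python as follows. The provider strings are ONNX Runtime's execution provider names, but the preference order, the helper names (`choose_providers`, `run_model`), and the single-input assumption are illustrative choices for this example only.

```python
from typing import List, Sequence

# Assumed preference order for this sketch: accelerators first, CPU as fallback.
PREFERRED_PROVIDERS = (
    "TensorrtExecutionProvider",
    "CUDAExecutionProvider",
    "OpenVINOExecutionProvider",
    "CPUExecutionProvider",
)


def choose_providers(
    available: Sequence[str],
    preferred: Sequence[str] = PREFERRED_PROVIDERS,
) -> List[str]:
    """Keep the preferred providers that this build offers, in preference order."""
    chosen = [name for name in preferred if name in available]
    # Fall back to the CPU provider when none of the preferred ones is present.
    return chosen or ["CPUExecutionProvider"]


def run_model(model_path: str, input_array):
    """Run one inference call on a single-input model (requires onnxruntime)."""
    # Imported lazily so choose_providers can be used without onnxruntime.
    import onnxruntime as ort

    session = ort.InferenceSession(
        model_path,
        providers=choose_providers(ort.get_available_providers()),
    )
    input_name = session.get_inputs()[0].name
    # Passing None requests every model output; return the first one.
    return session.run(None, {input_name: input_array})[0]
```

The calling code stays the same whether the session ends up on TensorRT, CUDA, OpenVINO, or the CPU; only the provider list changes with the hardware and the installed ONNX Runtime build.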

Get innovations into production faster

Development agility is a key factor in overall costs. ONNX Runtime was built on the experience of taking PyTorch models to production in high-scale services like Microsoft Office, Bing, and Azure. It used to take weeks or months to take a model from R&D to production. With ONNX Runtime, models can be ready to deploy at scale in hours or days.